We'll dig deeper into DAGs, but first, let's install Airflow. At various points in the pipeline, information is consolidated or broken out. In the above example, the DAG begins with edges 1, 2, and 3, kicking things off. Interestingly, a "child" edge can also have multiple parents (this is where our tree analogy fails us). That's it - there's no need for fancy language here.Įdges in a DAG can have numerous "child" edges.

Every node has a "parent" node, which means that a child node cannot be its parents' parent. The connection of edges is called a vertex.Ĭonsider how nodes in a tree data structure relate. Each "step" in the workflow (an edge) is reached via the previous step until we reach the beginning.

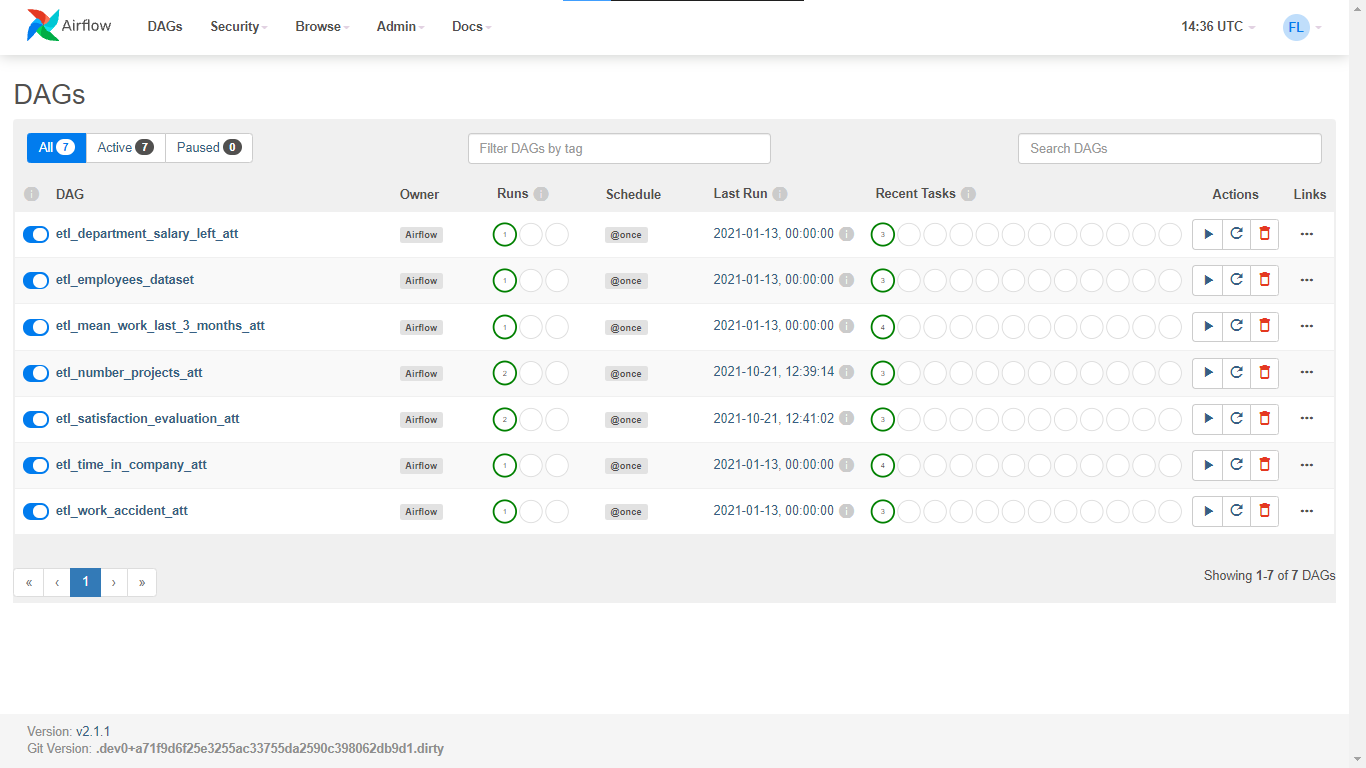

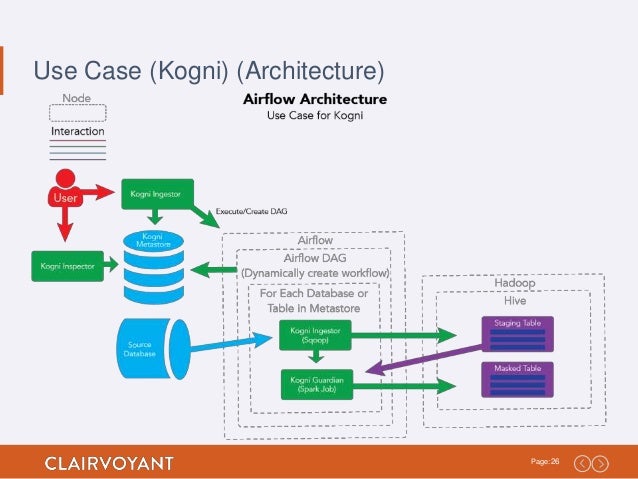

In computer science, a directed acyclic graph means a workflow that only flows in a single direction. The OG Dag What is a DAG?Īirflow refers to what we've been calling "pipelines" as DAGs (directed acyclic graphs). To get started with Airflow, we should stop throwing the word "pipeline" around. Not only can we check the heartbeat of our pipelines, but we can also view graphical representations of the code we write. Even more impressive is that the code we write is visually represented in Airflow's GUI. By creating our pipelines within Airflow, we gain immediate visibility across all our pipelines to spot areas of failure quickly. Wrangling multiple pipelines prone to failure might be the least glorious aspect of any data engineer's job. The more apparent benefits of Airflow are centered around its powerful GUI. By leveraging these tools, engineers see their pipelines abiding by a well-understood format, making code readable to others. Airflow has numerous powerful integrations that serve almost any need when outputting data. As a web framework helps developers by abstracting common patterns, Airflow fills a similar need for data engineers by providing tools to trivialize repetition in pipeline creation. It's not too crazy to group these benefits into two main categories: code quality and visibility.Īirflow could be described as a 'framework' for creating data pipelines. What's the Point of Airflow?Īirflow provides countless benefits to those in the data pipeline business. If you're a data engineer looking to break free from the same old, Airflow might be the missing piece data engineers need to standardize and simplify your workflow. Data pipelines are increasingly synonymous with the Hadoop/Spark ecosystems, whether by design or habit.įor the rest of us, there's Airflow: the OG Python-based pipeline tool to find its way into the Apache Foundation that has enjoyed wild popularity. 
Technology companies have seen plenty of trends in the last decade, such as the popularity of "Python shops" and a self-proclaimed notion of being "data-driven." Conventional logic would suggest that there are probably many more "data-driven Python shops," but that manifested in reality.
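
The DAG rules described in this post (a node can have several children, a child can have several parents, and nothing ever loops back) can be checked in plain Python without Airflow. The following is a minimal sketch, not Airflow code: the node numbers and the `parents` mapping are hypothetical, made up for illustration, and `graphlib` is the standard-library module available from Python 3.9.

```python
from graphlib import CycleError, TopologicalSorter  # standard library, Python 3.9+

# Hypothetical DAG for illustration: each key is a node (a step),
# and each value is the set of that node's parents.
parents = {
    1: set(),      # nodes 1, 2, and 3 have no parents, so they kick things off
    2: set(),
    3: set(),
    4: {1, 2},     # a child with multiple parents (information is consolidated)
    5: {3},
    6: {3},        # node 3 has multiple children, 5 and 6 (information is broken out)
    7: {4, 5, 6},
}


def run_order(graph):
    """Return one valid execution order; raises CycleError if the graph loops back."""
    return list(TopologicalSorter(graph).static_order())


def is_acyclic(graph):
    """True when the graph is a valid DAG, i.e. no node is its own ancestor."""
    try:
        run_order(graph)
        return True
    except CycleError:
        return False


order = run_order(parents)
position = {node: index for index, node in enumerate(order)}

# Making node 1 a child of node 7 creates the loop 1 -> 4 -> 7 -> 1.
cyclic = {**parents, 1: {7}}

print(order)               # one of several valid orders; parents always come first
print(is_acyclic(parents))
print(is_acyclic(cyclic))
```

Several orders are valid for the same graph, which is why the sketch checks only that every parent runs before its children rather than expecting one exact sequence.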
